Q. What do you mean by serverless?

The serverless architecture is a way to build and run applications and services without having to manage the infrastructure behind it. Your application still runs on servers, of course, but the server management is done by AWS.

You no longer have to provision, scale, and maintain servers to run your applications, databases, or storage systems. With the serverless architecture, your code executes only when needed and scales automatically from a few requests per day to thousands of requests per second. And you only pay for the compute time you consume; there is no charge when your code’s not running. You can run code for virtually any type of application or back-end service, all with zero administration.
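To make “execute your code only when needed” concrete, here is a minimal sketch of a Python function in the shape AWS Lambda expects: a handler that receives an event and a context object and returns a result. The `name` field and the greeting are hypothetical. The platform calls the handler only when an event arrives, and nothing runs (or is billed) in between.

```python
import json


def lambda_handler(event, context):
    """Entry point that Lambda would invoke once per event.

    `event` is a dict describing the trigger; `context` carries runtime
    metadata (unused here). The "name" field is a hypothetical input.
    """
    name = event.get("name", "world")
    # Return a JSON-serializable result; no server process is ours to manage.
    return {"message": f"Hello, {name}!"}


if __name__ == "__main__":
    # Local smoke test: call the handler directly with a sample event.
    print(json.dumps(lambda_handler({"name": "serverless"}, None)))
```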

So, why use serverless? Well, serverless enables you to build modern applications with increased agility and lower total cost of ownership. Building serverless applications means that your developers can focus on their core product instead of worrying about managing and operating servers or runtimes, either in the cloud or on premises.

So, what are the benefits of serverless? Serverless has four main benefits.

- One, no server management. There’s no need to provision or maintain any servers. There’s no software or runtime to install, maintain, or administer. All of that is taken care of for you.

- Two, flexible scaling. Your application scales automatically, and you adjust its capacity by toggling units of consumption, such as throughput or memory, rather than units of individual servers.

- Three, you pay for value. You pay for consistent throughput or execution duration rather than by the server unit.

- Four, automated high availability. Serverless provides built-in availability and fault tolerance. You don’t need to architect for these capabilities, since the services running the application provide them by default.
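A little arithmetic makes “pay for value” concrete. The sketch below models a common serverless pricing shape: a per-request charge plus a charge per GB-second, which is memory allocated multiplied by execution time. Both rates are hypothetical placeholders passed in as parameters, not current AWS prices, so check the official pricing page for real numbers.

```python
def monthly_cost(requests, avg_duration_ms, memory_mb,
                 price_per_million_requests, price_per_gb_second):
    """Estimate pay-per-use compute cost; both prices are caller-supplied assumptions."""
    # Compute consumed = memory (GB) x duration (s), summed over all requests.
    gb_seconds = requests * (avg_duration_ms / 1000) * (memory_mb / 1024)
    request_cost = requests / 1_000_000 * price_per_million_requests
    compute_cost = gb_seconds * price_per_gb_second
    return request_cost + compute_cost


# Idle code costs nothing: zero requests means zero charge.
print(monthly_cost(0, 200, 512, 0.20, 0.0000166667))
# Hypothetical rates: 3 million requests, 200 ms each, 512 MB of memory.
print(round(monthly_cost(3_000_000, 200, 512, 0.20, 0.0000166667), 2))
```

Note that memory is one of the “units of consumption” from the scaling point. Doubling `memory_mb` doubles the compute charge for the same duration.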

Amazon Lex:

Q. What is a chat bot?

The term is derived from “chat robot.” Why would we need a chat robot? Think about a chat interaction you may have had with a support team and how long you waited in line to get a simple query answered, when answering that query could have been automated. However, such a machine needs to be highly engaging and provide conversational experiences through text or even voice.

I need a bot that can take both text, which users may send over a chat in Facebook Messenger, Slack, or via SMS, and audio input. If the input is text, the bot can start parsing what I have typed. However, if it’s voice, the bot first needs to recognize what was said and convert the audio to text.

Q. Why is audio to text tough?

Converting the words is only half the problem. The bot then needs to parse that text the way a human would and recognize its intent: it has to deduce what the speaker actually means, not just the words they say. That is what we call “natural-language understanding,” or NLU.

Q. How do you convert text to speech?

Amazon Polly is a text to speech service that uses advanced deep learning technologies to synthesize speech that sounds like a human voice.

Lex integrates with Polly automatically. When the user interacts with Lex via speech, Lex sends the response text to Polly to be synthesized into speech, and Polly returns an audio file that is then sent back to the user.
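The text-versus-voice split can be sketched against the Lex V1 runtime API in boto3, which is the `lex-runtime` client. Its `post_text` call takes typed input. Its `post_content` call takes audio, transcribes it, and, when you ask for an audio reply with `accept="audio/mpeg"`, returns speech synthesized by Polly. The bot name, alias, and user ID are placeholders you would supply, and the audio format shown assumes 16 kHz, 16-bit mono PCM.

```python
def send_to_lex(client, bot_name, bot_alias, user_id, text=None, audio_pcm=None):
    """Route one user turn to Lex as text or as speech.

    `client` is expected to be boto3.client("lex-runtime") (Lex V1).
    `audio_pcm` is assumed to be 16 kHz, 16-bit, mono PCM bytes.
    """
    if text is not None:
        # Text input: Lex applies NLU directly to what the user typed.
        return client.post_text(botName=bot_name, botAlias=bot_alias,
                                userId=user_id, inputText=text)
    if audio_pcm is not None:
        # Voice input: Lex converts speech to text first. Requesting an audio
        # reply makes Lex return a Polly-synthesized response in audioStream.
        return client.post_content(botName=bot_name, botAlias=bot_alias,
                                   userId=user_id,
                                   contentType="audio/l16; rate=16000; channels=1",
                                   accept="audio/mpeg",
                                   inputStream=audio_pcm)
    raise ValueError("provide either text or audio_pcm")
```

Passing the client in rather than creating it inside the function keeps the routing logic testable without AWS credentials.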

Q. What is the flow of Amazon Lex?

A few basic terms are used:

- An intent is a particular goal that the user wants to achieve. To express that goal, the user sends utterances.

- Utterances are phrases like “I’d like to book a hotel.” The utterance is sent to the bot, which compares it against the sample utterances of all its intents and tries to match it to one of those intents.

- Next, the bot doesn’t yet have all the information it needs to book a hotel, so it has to ask the user a few more questions. Those questions are sent via something we call a “prompt.”

- A prompt such as “Sure, which city?” asks the user that question. The user answers, for example with “Chicago,” and that answer fills something we call a “slot.”

- A slot is data that the user must provide to fulfill the intent. Slots the bot cannot do without are marked as required.

- The bot needs an answer for every required slot before it can fulfill the intent. Once all of the required slots have been filled, it can ask the user to confirm.

- Then it’s time to fulfill the intent. Fulfillment is the business logic required to achieve the user’s goal, the intent.
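The intent, prompt, slot, and fulfillment flow above can be sketched as a Lambda code hook. This assumes the Amazon Lex V1 Lambda event and response format (`currentIntent` in the event, a `dialogAction` of type `ElicitSlot` or `Close` in the response). Lex V2 uses a different shape. The `BookHotel` intent, its slot names, and the confirmation message are hypothetical. A real fulfillment step would call your booking back end.

```python
# Hypothetical required slots for a "BookHotel" intent, with their prompts.
REQUIRED_SLOTS = {
    "Location": "Sure, which city?",
    "CheckInDate": "What day do you want to check in?",
}


def elicit_slot(intent_name, slots, slot_name, prompt):
    """Ask the user for one missing slot (Lex V1 'ElicitSlot' dialog action)."""
    return {"dialogAction": {
        "type": "ElicitSlot",
        "intentName": intent_name,
        "slots": slots,
        "slotToElicit": slot_name,
        "message": {"contentType": "PlainText", "content": prompt},
    }}


def close(message):
    """End the conversation and report the intent as fulfilled."""
    return {"dialogAction": {
        "type": "Close",
        "fulfillmentState": "Fulfilled",
        "message": {"contentType": "PlainText", "content": message},
    }}


def lambda_handler(event, context):
    intent = event["currentIntent"]
    slots = intent["slots"]
    # Prompt for the first required slot that is still empty.
    for slot_name, prompt in REQUIRED_SLOTS.items():
        if not slots.get(slot_name):
            return elicit_slot(intent["name"], slots, slot_name, prompt)
    # Every required slot is filled: run the (hypothetical) fulfillment logic.
    return close(f"Booked a hotel in {slots['Location']} "
                 f"from {slots['CheckInDate']}.")
```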

The bot configuration also has an Error Handling section. If the bot can’t match what the user asks to an utterance in an intent, it retries twice by sending a clarification prompt, for example “Sorry, what can I help you with?” You can add more prompts, and the bot will randomly select one of them, which makes it seem a lot more human. If it still can’t work out what the user wants, either because it can’t match the input to an utterance in an intent or because the user doesn’t provide a valid answer to a prompt, it uses one of the hang-up phrases. In this case, that’s “Sorry, I am not able to assist at this time.”
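The retry behavior described above can be shown with a small toy model: up to two clarification prompts chosen at random, then a hang-up phrase. This is an illustration of the described behavior, not Lex’s implementation, since Lex does this for you based on the console settings. The second prompt and the `max_retries` parameter are assumptions.

```python
import random

CLARIFICATION_PROMPTS = [
    "Sorry, what can I help you with?",
    "Sorry, can you please repeat that?",  # hypothetical extra prompt
]
HANG_UP_PHRASES = ["Sorry, I am not able to assist at this time."]


def error_response(failed_attempts, max_retries=2, rng=random):
    """Return the bot's reply after `failed_attempts` unrecognized inputs in a row."""
    if failed_attempts <= max_retries:
        # Picking a prompt at random makes the bot sound less robotic.
        return rng.choice(CLARIFICATION_PROMPTS)
    # Out of retries: give up politely with a hang-up phrase.
    return rng.choice(HANG_UP_PHRASES)


for attempt in range(1, 4):
    print(attempt, error_response(attempt))
```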

You don’t need to specify every possible phrasing, because Lex uses natural-language-understanding deep learning algorithms to generalize from the sample utterances. Sometimes, though, you may need to add a few more utterances that the model doesn’t associate properly on its own. For example, for a “Book a Car” intent, three utterances that come to mind are:

- make a car reservation,

- reserve a car,

- book a car.
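If you define intents in code rather than in the console, the sample utterances go into the intent definition. The sketch below builds keyword arguments for `put_intent` on the Lex V1 model-building client in boto3, which is the `lex-models` client. `BookCar` is a hypothetical intent name, since Lex intent names cannot contain spaces. The `ReturnIntent` fulfillment type hands the filled intent back to the client app instead of calling a Lambda function.

```python
# Sample utterances from the list above; "BookCar" is a hypothetical intent name.
BOOK_CAR_UTTERANCES = [
    "make a car reservation",
    "reserve a car",
    "book a car",
]


def book_car_intent_request(utterances=BOOK_CAR_UTTERANCES):
    """Build keyword arguments for the Lex V1 model-building put_intent call."""
    return {
        "name": "BookCar",
        "sampleUtterances": list(utterances),
        # ReturnIntent: return the filled intent to the client application
        # rather than invoking a Lambda fulfillment function.
        "fulfillmentActivity": {"type": "ReturnIntent"},
    }


# With AWS credentials configured, this could be sent with:
#   boto3.client("lex-models").put_intent(**book_car_intent_request())
print(book_car_intent_request()["sampleUtterances"])
```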

There are many built-in slot types, and all of their names start with the “AMAZON.” prefix. For example, AMAZON.DATE takes a date entered by a user and transforms it into a date that machines can understand, such as turning “tomorrow” into 2019-02-21. For your own slot types, you can also restrict values to only the ones you add by choosing “Restrict to slot values and synonyms.”
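Both behaviors can be illustrated with a toy Python model. This is not how Lex implements AMAZON.DATE. It only mirrors the input/output contract described above: a relative phrase goes in and an ISO date comes out, and a restricted slot maps synonyms to one canonical value and rejects everything else. The phrase table, reference date, and car-type values are hypothetical.

```python
from datetime import date, timedelta

# Toy table of relative phrases; the real AMAZON.DATE understands far more.
RELATIVE_DAYS = {"today": 0, "tomorrow": 1, "yesterday": -1}


def resolve_date(phrase, today):
    """Turn a phrase like "tomorrow" into an ISO-8601 date string (YYYY-MM-DD)."""
    offset = RELATIVE_DAYS.get(phrase.strip().lower())
    if offset is None:
        return None  # not understood by this toy resolver
    return (today + timedelta(days=offset)).isoformat()


# Restricted custom slot type: canonical value -> accepted synonyms (hypothetical).
CAR_TYPES = {"economy": ["cheap", "small"], "suv": ["sport utility", "4x4"]}


def resolve_restricted(value, slot_values=CAR_TYPES):
    """Return the canonical value for `value`, or None if it isn't allowed."""
    v = value.strip().lower()
    for canonical, synonyms in slot_values.items():
        if v == canonical or v in synonyms:
            return canonical
    return None


print(resolve_date("tomorrow", date(2019, 2, 20)))  # 2019-02-21
print(resolve_restricted("4x4"))  # suv
```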

Q. Why are versions required?

Versions give you immutable snapshots of your bot. You may have one bot version you use for dev, another for beta, and another for prod. Each may use a different version of an intent, and those intents may use a different version of a slot type.

I could point my application to the latest version and be done with it, but what happens if I make any modifications? I impact my users, which isn’t great for customer satisfaction. So I want my application to point to a specific version. But would that mean that each time I modify my bot, I need to redeploy my application? That would be pretty bad.

That’s why you should use an alias instead. An alias points to a version, so your application only needs to be deployed once. When I click Publish, the console asks me to create a new alias or update an existing one. I’ll create a new alias called “prod” and click Publish. This creates a new version, version 1, and an alias named “prod” that points to version 1 of our bot.

Now I can point my application at the prod alias and keep changing the bot without worrying, because the only way to impact the application is to publish the bot again and then change the prod alias to point to that new version. You would have to make a lot of mistakes in a row to cause that problem.
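The relationship between the editable draft, immutable versions, and aliases can be captured in a few lines. This is a toy Python model for illustration only, not the Lex API. In Lex V1, the real boto3 `lex-models` calls for these steps are `create_bot_version` and `put_bot_alias`.

```python
class Bot:
    """Toy model of bot versions and aliases (not the Lex API)."""

    def __init__(self, definition):
        self.draft = dict(definition)  # editable working copy
        self.versions = {}             # version number -> frozen snapshot
        self.aliases = {}              # alias name -> version number

    def publish(self, alias):
        """Snapshot the draft as a new immutable version and point `alias` at it."""
        number = len(self.versions) + 1
        self.versions[number] = dict(self.draft)
        self.aliases[alias] = number
        return number

    def resolve(self, alias):
        """What an application pinned to `alias` actually talks to."""
        return self.versions[self.aliases[alias]]


bot = Bot({"greeting": "Hi!"})
bot.publish("prod")                      # creates version 1; prod -> 1
bot.draft["greeting"] = "Hello there!"   # editing the draft...
print(bot.resolve("prod")["greeting"])   # ...leaves prod untouched: Hi!
bot.publish("prod")                      # version 2; prod -> 2
print(bot.resolve("prod")["greeting"])   # Hello there!
```

The application only ever references the alias name, so it never needs redeploying when a new version is published.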

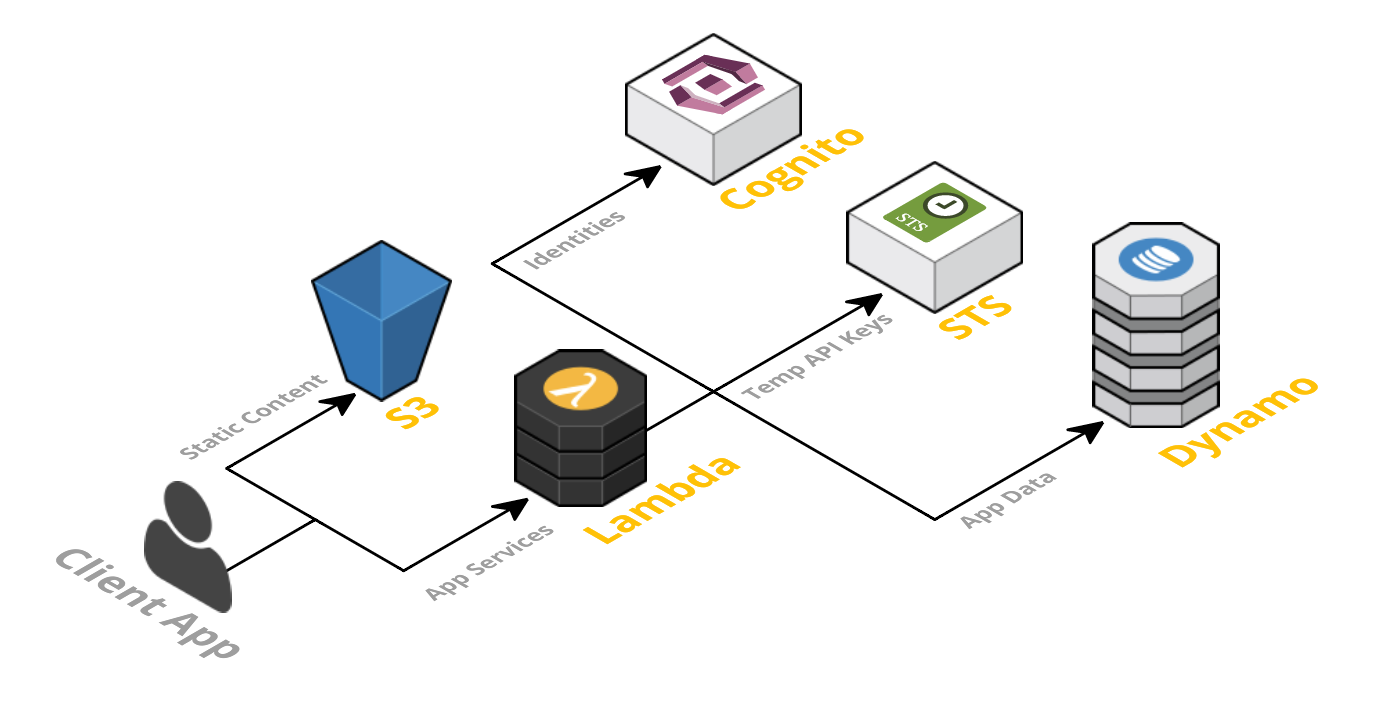

Creating a Serverless Website with Amazon S3:

Amazon S3 provides easy-to-use management features so you can organize your data and configure finely tuned access controls to meet your specific business, organizational, and compliance requirements.

One of the biggest advantages of using S3 is that you can host your website from an S3 bucket and reduce the load on your origin, whether that’s your own data center or your AWS Cloud infrastructure. It’s cost-effective, because S3 is a frugal option for storage and website hosting, and because reducing traffic to your origin means you don’t have to pay for more web servers by scaling vertically or horizontally. It also helps with security: because traffic isn’t sent to your infrastructure or origin, your site’s “attack surface” shrinks, adding an additional layer of security.

The one limitation is that you can’t use server-side processing code, such as .NET or PHP; you can only use client-side scripts, such as JavaScript. You can still set up your website to interact with other AWS services, such as DynamoDB or API Gateway, and pull dynamic information by passing variables in query strings.

Instead of using the website endpoint for S3, you can also bring your own domain name – such as example.com – to serve your content. Amazon S3, along with Amazon Route 53, supports hosting a website at the root domain.

- Click Create bucket.

- Your bucket appears in the list; click it.

- Go to Properties and enable static website hosting. Even though the bucket is open to the public, you still need to declare it a static website; if you don’t, no one will be able to reach it.

- Specify your index document, the main client-side page.

- Optionally, specify an error document such as error.html. You can also configure redirects here if you want them.

- If you want to take this site offline, you can always disable website hosting.

- Click Save, then go back to Overview.

- Now upload your website files.
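The console steps above can also be scripted. The following is a sketch using the boto3 S3 client, which makes several assumptions: the bucket already exists, you want it publicly readable as in the walkthrough (so its Block Public Access settings must allow a public bucket policy), and `index.html` and `error.html` are your document names. The bucket name and file mapping are placeholders.

```python
import json


def website_configuration(index="index.html", error="error.html"):
    """Static-website settings for put_bucket_website (same fields as the console)."""
    return {
        "IndexDocument": {"Suffix": index},
        "ErrorDocument": {"Key": error},
    }


def public_read_policy(bucket):
    """Bucket policy that lets anyone read (GET) the site's objects."""
    return json.dumps({
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "PublicReadGetObject",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": f"arn:aws:s3:::{bucket}/*",
        }],
    })


def deploy_site(s3, bucket, files):
    """Enable website hosting on an existing bucket and upload the site.

    `s3` is expected to be boto3.client("s3"); `files` maps local paths to
    (object_key, content_type) pairs.
    """
    s3.put_bucket_website(Bucket=bucket,
                          WebsiteConfiguration=website_configuration())
    s3.put_bucket_policy(Bucket=bucket, Policy=public_read_policy(bucket))
    for path, (key, content_type) in files.items():
        # Set Content-Type so browsers render HTML instead of downloading it.
        s3.upload_file(path, bucket, key, ExtraArgs={"ContentType": content_type})


# Taking the site offline again (the console's "disable website hosting"):
#   s3.delete_bucket_website(Bucket=bucket)
```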

That’s how easy it is. Because this website is hosted on S3, a managed service from AWS, I don’t have to worry about whether it will be resilient, cost-effective, or secure. This is a great way to have a public-facing website without having to worry about back-end servers or scaling them up or down.

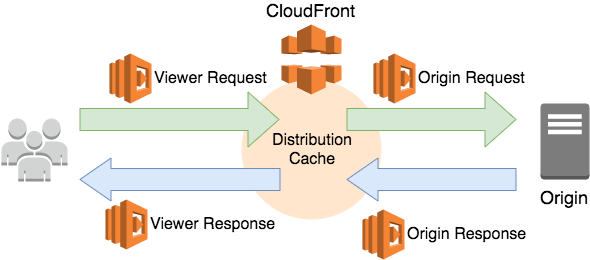

Introduction to Amazon CloudFront:

Amazon CloudFront speeds up content delivery by leveraging its global network of data centers, known as “edge locations,” with edge servers all around the world.

Requests no longer have to traverse the globe to reach our content. Instead, each request is routed to the edge location with the lowest latency, which is usually the nearest one. CloudFront then serves cached content quickly and directly to the nearby user. If the content is not yet cached at an edge server, CloudFront retrieves it from the origin, here the S3 bucket. Because that content travels over the AWS private network instead of the public internet, and CloudFront optimizes the TCP handshake, the request and response are still much faster than crossing the public internet.
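The cache-hit and cache-miss behavior of a single edge location can be sketched as a toy Python model. It is a simplification: real CloudFront caching also depends on cache policies, headers, and invalidations. The one-day TTL default and the in-memory “origin” are assumptions for illustration.

```python
import time


class EdgeCache:
    """Toy model of one edge location: serve from cache, else fetch from origin."""

    def __init__(self, fetch_from_origin, ttl_seconds=86400, clock=time.monotonic):
        self.fetch_from_origin = fetch_from_origin  # e.g. a read from the S3 origin
        self.ttl = ttl_seconds
        self.clock = clock
        self.store = {}  # path -> (expires_at, body)
        self.hits = 0
        self.misses = 0

    def get(self, path):
        entry = self.store.get(path)
        now = self.clock()
        if entry is not None and entry[0] > now:
            self.hits += 1    # cached and fresh: answered at the edge
            return entry[1]
        self.misses += 1      # not cached or expired: go back to the origin
        body = self.fetch_from_origin(path)
        self.store[path] = (now + self.ttl, body)
        return body


origin = {"/index.html": "<h1>Hello</h1>"}
edge = EdgeCache(origin.__getitem__)
edge.get("/index.html")  # miss: fetched from the origin
edge.get("/index.html")  # hit: served from the edge
print(edge.hits, edge.misses)
```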

By using CloudFront, we can set up additional access restrictions, such as geo restrictions, signed URLs, and signed cookies, to further constrain access to the content according to different criteria.
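As an illustration of signed URLs, here is a sketch of the “canned policy” form. The URL carries an expiry time, a signature over a small JSON policy, and the key ID. The RSA signing step needs your private key and a crypto library, so it is left to a caller-supplied `sign` function (hypothetical here). botocore also ships a `CloudFrontSigner` helper that performs these steps for you. The distribution domain and key ID in the test are placeholders.

```python
import base64
import json


def canned_policy(url, expires_epoch):
    """Canned policy for a CloudFront signed URL: `url` is valid until `expires_epoch`."""
    policy = {
        "Statement": [{
            "Resource": url,
            "Condition": {"DateLessThan": {"AWS:EpochTime": expires_epoch}},
        }]
    }
    # CloudFront expects the policy JSON without whitespace.
    return json.dumps(policy, separators=(",", ":"))


def cloudfront_safe_b64(data: bytes) -> str:
    """Base64-encode, then swap characters that are invalid in a query string."""
    encoded = base64.b64encode(data).decode("ascii")
    return encoded.replace("+", "-").replace("=", "_").replace("/", "~")


def signed_url(url, expires_epoch, key_pair_id, sign):
    """Build a canned-policy signed URL.

    `sign` is a hypothetical caller-supplied function mapping the policy bytes
    to an RSA signature made with the CloudFront key pair's private key.
    """
    signature = sign(canned_policy(url, expires_epoch).encode("utf-8"))
    sep = "&" if "?" in url else "?"
    return (f"{url}{sep}Expires={expires_epoch}"
            f"&Signature={cloudfront_safe_b64(signature)}"
            f"&Key-Pair-Id={key_pair_id}")
```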

Another security feature of CloudFront is origin access identity, or OAI. OAI restricts access to an S3 bucket and its content to only CloudFront and the operations that CloudFront performs. CloudFront also includes additional protection against malicious exploits. To provide these safeguards, CloudFront integrates with both AWS WAF, a web application firewall that helps protect web applications from common web exploits, and AWS Shield, a managed DDoS protection service for web applications running on AWS.

- AWS WAF lets you control access to your content based on conditions that you specify, for example IP addresses or the query string of a content request. CloudFront then responds with either the requested content, if the conditions are met, or an HTTP 403 Forbidden error.

- All CloudFront customers benefit from the automatic protection of AWS Shield Standard at no additional charge.
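The allow-or-403 decision for an IP-based condition can be sketched as a toy function. This is not WAF’s rule engine, only the outcome it produces. The allowed range 203.0.113.0/24 is a reserved documentation network used here as a placeholder.

```python
import ipaddress

# Hypothetical condition: only requests from these networks get the content.
ALLOWED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def handle_request(client_ip, path, content):
    """Toy model of an IP-based WAF rule in front of CloudFront."""
    ip = ipaddress.ip_address(client_ip)
    if not any(ip in net for net in ALLOWED_NETWORKS):
        # Condition not met: CloudFront answers 403 Forbidden.
        return 403, "Forbidden"
    if path not in content:
        return 404, "Not Found"
    return 200, content[path]


print(handle_request("203.0.113.7", "/index.html", {"/index.html": "ok"}))
print(handle_request("198.51.100.1", "/index.html", {"/index.html": "ok"}))
```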

Introduction to Amazon API Gateway:

So API Gateway is a fully managed service designed to make it easy for developers to create, publish, maintain, monitor, and secure application programming interfaces, or APIs, at any scale. With a few clicks in the AWS Management Console, you can create REST and WebSocket APIs that act as a front door for applications to access data, business logic, or other functionality from your back-end services, such as workloads running on Amazon EC2 and web applications. API Gateway also provides an easy interface for code running on AWS Lambda and other AWS services.
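When API Gateway fronts Lambda, a common setup is Lambda proxy integration. API Gateway passes the whole HTTP request to the function as the event, and expects back a dict with `statusCode`, optional `headers`, and a string `body`. The following sketch reads a query-string parameter, which is how the static S3 site described earlier could pass variables. The `city` parameter and the empty hotel list are hypothetical.

```python
import json


def lambda_handler(event, context):
    """Back end for a REST API method using Lambda proxy integration."""
    # API Gateway sets queryStringParameters to None when there is no query string.
    params = event.get("queryStringParameters") or {}
    city = params.get("city")  # hypothetical parameter name
    if not city:
        return {"statusCode": 400,
                "headers": {"Content-Type": "application/json"},
                "body": json.dumps({"error": "missing 'city' parameter"})}
    # Hypothetical business logic: look up hotels for the requested city.
    return {"statusCode": 200,
            "headers": {"Content-Type": "application/json"},
            "body": json.dumps({"city": city, "hotels": []})}
```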

Q. Why would you want to use API Gateway?

Well, it turns out that software development organizations are moving more toward microservice architectures. Microservices are an approach to software development where software is composed of small independent services that communicate over well-defined APIs. Microservice architectures make applications easier to scale and faster to develop, which enables innovation and accelerates time-to-market for new features.

Q. What other reasons are there to use it?

Well, developers can add headers and map input variables from POST events to whatever format the target application needs. Here are several more reasons you might want to use API Gateway.

- Efficient API development: You can run multiple versions of the same API simultaneously with API Gateway. This allows you to quickly iterate, test, and release new versions.

- Easy monitoring: You can monitor performance metrics and information on API calls, data latency, and error rates from the API Gateway dashboard, which lets you see millions of requests in a single pane of glass.

- Performance: You can provide end users with the lowest possible latency for API requests and responses by taking advantage of the global network of Amazon CloudFront edge locations. You can also throttle traffic and cache the output of API calls, so that back-end operations withstand traffic spikes and back-end systems are not called unnecessarily.

- Cost savings: API Gateway provides a tiered pricing model for API requests, so your per-request cost decreases as your API usage increases, per Region, across your AWS accounts.

- Flexible security controls: You can authorize access to your APIs with AWS Identity and Access Management (IAM) as well as Amazon Cognito. If you use OAuth tokens or other authorization mechanisms, API Gateway can help you verify incoming requests as well. By placing an extra layer in front of your back-end service, you add protection and security.
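API Gateway’s throttling is documented as using a token bucket algorithm: a steady refill rate plus a burst allowance. Here is a minimal Python sketch of that algorithm, not API Gateway’s code. The rate and burst values are hypothetical.

```python
class TokenBucket:
    """Token bucket: refills `rate` tokens per second, holds at most `burst`."""

    def __init__(self, rate, burst, now=0.0):
        self.rate = rate
        self.burst = burst
        self.tokens = float(burst)  # start full so an initial burst is allowed
        self.last = now

    def allow(self, now):
        """Return True if a request arriving at time `now` may pass."""
        # Refill for the time elapsed since the last check, capped at burst.
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # API Gateway would answer 429 Too Many Requests


bucket = TokenBucket(rate=10, burst=5)
spike = [bucket.allow(0.0) for _ in range(8)]  # 8 requests at the same instant
print(spike)  # the first 5 pass, the remaining 3 are throttled
print(bucket.allow(0.1))  # 0.1 s later one token has refilled, so this passes
```

The burst size absorbs short spikes, and the rate caps sustained traffic, which is what protects the back end.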

Mock Integrations in API Gateway

Amazon API Gateway supports mock integrations for API methods. This feature enables API developers to generate API responses from API Gateway directly, without the need for an integration backend. As an API developer, you can use this feature to unblock dependent teams that need to work with an API before the project development is complete. You can also use this feature to provision a landing page for your API, which can provide an overview of and navigation to your API. For an example of such a landing page, see the integration request and response of the GET method on the root resource of the example API discussed in Build an API Gateway API from an Example.
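A mock integration can also be set up in code. The sketch below uses the boto3 `apigateway` client (REST APIs) and assumes the REST API and resource already exist. It wires a GET method to a `MOCK` integration whose request template selects status 200 and whose response template returns a canned JSON body. The IDs and the mock payload are placeholders, and the method still has to be deployed to a stage before clients can call it.

```python
import json

# Canned payload a dependent team can code against before the back end exists.
MOCK_BODY = {"hotels": [{"name": "Example Inn", "city": "Chicago"}]}  # hypothetical


def add_mock_get(apigw, rest_api_id, resource_id):
    """Wire a GET method to a MOCK integration that always returns MOCK_BODY.

    `apigw` is expected to be boto3.client("apigateway").
    """
    common = {"restApiId": rest_api_id, "resourceId": resource_id, "httpMethod": "GET"}
    apigw.put_method(authorizationType="NONE", **common)
    # For MOCK integrations, the request template picks the status code to return.
    apigw.put_integration(type="MOCK",
                          requestTemplates={"application/json": '{"statusCode": 200}'},
                          **common)
    apigw.put_method_response(statusCode="200", **common)
    # The response template is the body API Gateway sends back directly.
    apigw.put_integration_response(
        statusCode="200",
        responseTemplates={"application/json": json.dumps(MOCK_BODY)},
        **common)
```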

Origin Access Identity (OAI)

Origin Access Identity provides a method to restrict access to S3 content to only a CloudFront Distribution.
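In practice, the restriction is enforced by an S3 bucket policy that grants read access only to the OAI’s principal. Here is a sketch that builds such a policy, assuming you keep public access blocked on the bucket so objects are reachable only through the distribution. The bucket name and OAI ID are placeholders. (AWS now recommends origin access control, OAC, for new distributions, though OAI is still supported.)

```python
import json


def oai_bucket_policy(bucket, oai_id):
    """Bucket policy that lets only one CloudFront OAI read objects in `bucket`."""
    return json.dumps({
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {
                "AWS": ("arn:aws:iam::cloudfront:user/"
                        f"CloudFront Origin Access Identity {oai_id}")
            },
            "Action": "s3:GetObject",
            "Resource": f"arn:aws:s3:::{bucket}/*",
        }],
    })


print(oai_bucket_policy("my-site-bucket", "E2EXAMPLE"))
```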
