Services and applications typically generate logs that contain large amounts of information. These logs are written to persistent storage, so they can be reviewed and analyzed at any time.

By monitoring the data in your logs, you can identify potential issues as soon as they occur. Resolving incidents as quickly as possible is paramount for any production system.

Having more data about how your environment is running far outweighs the disadvantage of not having the information you need, especially when it matters most to your business, such as during incidents and security breaches.

Some logs can be monitored in real-time, allowing automatic responses to be carried out depending on the data contents of the log. Logs often contain vast amounts of metadata, including date stamps and source information such as IP addresses or usernames.

This blog explains how to store and analyze logs in AWS and outlines some recommended practices.

Storing and analyzing logs in AWS can be done using various services and tools, depending on your requirements and use case. Here are some services you can consider:

A. Amazon CloudWatch

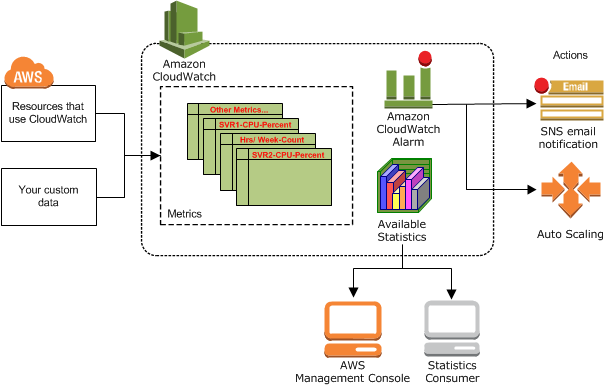

Amazon CloudWatch provides valuable insights into the health and performance of your applications and resources, which can help you optimize their performance, increase availability, and improve the overall customer experience. The main components of Amazon CloudWatch include:

1. Dashboards: You can design custom dashboards that present your data in the views you need. For example, one dashboard can show all the performance metrics and alarms for the resources relating to a specific customer.

Once you have built your dashboards, you can share them with other users, even those who do not have access to your AWS account.

Note: The resources within a customized dashboard can come from multiple Regions.

2. Metrics: A metric tracks a specific element of an application or resource over time, for example, the number of disk read operations (DiskReadOps) on an EC2 instance.

Anomaly detection applies machine learning algorithms to your metric data to detect activity that falls outside the normal baseline.

Note: Different services will offer different metrics.

3. Alarms: You can configure thresholds for each metric and have CloudWatch carry out automatic actions when those thresholds are breached.

For example, you could set an alarm to trigger an Auto Scaling action if the CPU utilization of an EC2 instance stays above 75% for more than 2 minutes.
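As a sketch of the example above, the hypothetical helper below builds the keyword arguments for CloudWatch's PutMetricAlarm API (exposed in boto3 as `put_metric_alarm`). The instance ID and scaling policy ARN are placeholders you would supply, and the 60-second period assumes detailed (1-minute) monitoring is enabled on the instance; two consecutive breaching 1-minute datapoints approximate "above 75% for 2 minutes".

```python
from typing import Any


def build_cpu_alarm_params(instance_id: str, scaling_policy_arn: str) -> dict[str, Any]:
    """Hypothetical helper: keyword arguments for CloudWatch PutMetricAlarm.

    The alarm fires when the instance's average CPUUtilization is above 75%
    for two consecutive 60-second periods.
    """
    return {
        "AlarmName": f"high-cpu-{instance_id}",  # illustrative naming scheme
        "Namespace": "AWS/EC2",
        "MetricName": "CPUUtilization",
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Average",
        "Period": 60,               # seconds per datapoint (needs detailed monitoring)
        "EvaluationPeriods": 2,     # 2 x 60 s = 2 minutes
        "DatapointsToAlarm": 2,     # both datapoints must breach
        "Threshold": 75.0,
        "ComparisonOperator": "GreaterThanThreshold",
        "AlarmActions": [scaling_policy_arn],  # e.g. an Auto Scaling policy ARN
    }
```

With boto3 installed and credentials configured, you could pass these as `boto3.client("cloudwatch").put_metric_alarm(**params)`.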

An alarm associated with a metric is always in one of three states:

- OK – The metric is within the configured threshold.

- ALARM – The metric has breached the configured threshold.

- INSUFFICIENT_DATA – Not enough data is available to determine the alarm state.
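To illustrate how these three states relate to the datapoints, here is a deliberately simplified model. It is not CloudWatch's exact evaluation algorithm (which also has configurable handling of missing data); the function name and its rules are assumptions made for illustration.

```python
from typing import Optional, Sequence


def evaluate_alarm_state(
    datapoints: Sequence[Optional[float]],
    threshold: float,
    evaluation_periods: int,
    datapoints_to_alarm: int,
) -> str:
    """Simplified model of alarm evaluation (not CloudWatch's exact algorithm).

    Looks at the last `evaluation_periods` datapoints (oldest first; None means
    missing). Returns INSUFFICIENT_DATA if the window is short or entirely
    missing, ALARM if at least `datapoints_to_alarm` present values exceed the
    threshold, and OK otherwise.
    """
    window = list(datapoints)[-evaluation_periods:]
    present = [v for v in window if v is not None]
    if len(window) < evaluation_periods or not present:
        return "INSUFFICIENT_DATA"
    breaching = sum(1 for v in present if v > threshold)
    return "ALARM" if breaching >= datapoints_to_alarm else "OK"
```

For example, `evaluate_alarm_state([70.0, 80.0, 82.0], 75.0, 2, 2)` returns `"ALARM"`, because the last two datapoints are both above 75.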

4. Amazon EventBridge (formerly CloudWatch Events): EventBridge connects event sources, such as your applications and AWS services, to a variety of targets, allowing you to monitor and respond to events in real time.

The significant benefit of using EventBridge is that it lets you build event-driven architectures in which event producers and consumers are decoupled.

Its key elements are:

- Rules: A rule acts as a filter for an incoming stream of events and routes matching events to the targets defined within the rule. Most targets must be in the same Region as the rule.

- Targets: Targets are the destinations to which rules send events, such as AWS Lambda functions, Amazon SQS queues, Amazon Kinesis data streams, or Amazon SNS topics. All events are delivered in JSON format.

- Event buses: An event bus receives events from your applications and AWS services, and each rule is associated with a specific event bus.
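To show how a rule's event pattern filters events, here is a minimal matcher that supports only a subset of EventBridge pattern syntax: nested objects and lists of exact values. Prefix, numeric, `exists`, and `anything-but` filters are not handled, and the `matches` function is a hypothetical illustration rather than an AWS API.

```python
from collections.abc import Mapping
from typing import Any


def matches(pattern: Mapping[str, Any], event: Mapping[str, Any]) -> bool:
    """Simplified EventBridge-style matching (exact-value lists and nesting only)."""
    for key, expected in pattern.items():
        if key not in event:
            return False  # every field named in the pattern must be present
        actual = event[key]
        if isinstance(expected, Mapping):
            # Nested pattern: recurse into the matching part of the event.
            if not isinstance(actual, Mapping) or not matches(expected, actual):
                return False
        elif isinstance(actual, list):
            # An array in the event matches if any element is in the pattern's list.
            if not any(item in expected for item in actual):
                return False
        elif actual not in expected:
            return False
    return True


# Example pattern: EC2 instances that stopped or terminated.
EC2_STOPPED = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["stopped", "terminated"]},
}
```

A rule using a pattern like `EC2_STOPPED` would forward only those state-change events to its targets; every other event on the bus is ignored by that rule.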

5. CloudWatch Logs: CloudWatch Logs gives you a centralized location to store logs from your own applications and from AWS services that produce logs, such as CloudTrail, EC2, and VPC Flow Logs.

An added advantage of CloudWatch Logs comes with installing the unified CloudWatch agent, which can collect logs and additional metrics from EC2 instances and from on-premises servers running Linux or Windows. These metrics are in addition to the default EC2 metrics that CloudWatch collects automatically.
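As a sketch, the hypothetical helper below produces a minimal CloudWatch agent configuration for a Linux host: it ships one log file to a log group and collects memory utilization, which is not among the default EC2 metrics. The file path and log group name are placeholders; check the agent's configuration reference before relying on this layout.

```python
import json


def build_agent_config(log_file: str, log_group: str) -> str:
    """Hypothetical helper: minimal CloudWatch agent config as a JSON string."""
    config = {
        "logs": {
            "logs_collected": {
                "files": {
                    "collect_list": [
                        {
                            "file_path": log_file,
                            "log_group_name": log_group,
                            # {instance_id} is a placeholder the agent fills in
                            "log_stream_name": "{instance_id}",
                        }
                    ]
                }
            }
        },
        "metrics": {
            "metrics_collected": {
                # Memory usage is not one of the default EC2 metrics.
                "mem": {"measurement": ["mem_used_percent"]}
            }
        },
    }
    return json.dumps(config, indent=2)
```

You would save the returned JSON to a file on the instance and point the agent at it when you start it.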

CloudWatch also offers several insights features, including CloudWatch Logs Insights, Container Insights, and Lambda Insights.
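For example, a CloudWatch Logs Insights query can pull the most recent error messages from a log group. The hypothetical helper below builds arguments for the CloudWatch Logs StartQuery API (boto3 `start_query`); the log group name and the `ERROR` marker are assumptions about your application's logs.

```python
import time
from typing import Any


def build_error_query(log_group: str, hours: int = 1, limit: int = 20) -> dict[str, Any]:
    """Hypothetical helper: StartQuery arguments that find recent "ERROR" log events."""
    end = int(time.time())
    return {
        "logGroupName": log_group,
        "startTime": end - hours * 3600,  # epoch seconds
        "endTime": end,
        "queryString": (
            "fields @timestamp, @message"
            " | filter @message like /ERROR/"
            " | sort @timestamp desc"
            f" | limit {limit}"
        ),
    }
```

With boto3, `boto3.client("logs").start_query(**params)` returns a `queryId`, which you then pass to `get_query_results` until the query's status is `Complete`.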

B. AWS CloudTrail

AWS CloudTrail records the API requests made in your AWS account. Each request, whether made by a user, a role, or an AWS service, is captured as an event, and a trail delivers these events as log files to an Amazon S3 bucket.

CloudTrail captures additional identifying information for every event, including the requester’s identity, the initiation timestamp, and the source IP address.

You can use the data captured by CloudTrail to improve your AWS environment in several ways:

- As a security analysis tool, for example to see who made a request, when, and from where.

- To help resolve and manage day-to-day operational issues and problems.
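As a sketch of the security-analysis use case, the hypothetical function below reads the JSON body of a CloudTrail log file (assumed already decompressed, since files delivered to S3 are gzipped) and summarizes each event's identity, action, time, and source IP. It also flags failed requests, which carry an `errorCode` field.

```python
import json
from typing import Any


def summarize_cloudtrail(log_file_body: str) -> list[dict[str, Any]]:
    """Hypothetical helper: who/what/when/where for each record in a CloudTrail log file."""
    records = json.loads(log_file_body).get("Records", [])
    summary = []
    for r in records:
        summary.append({
            "who": r.get("userIdentity", {}).get("arn"),  # may be absent for some identity types
            "what": f'{r.get("eventSource")}:{r.get("eventName")}',
            "when": r.get("eventTime"),
            "where": r.get("sourceIPAddress"),
            # errorCode is present only when the request failed (e.g. AccessDenied).
            "failed": "errorCode" in r,
        })
    return summary
```

Filtering the result for `failed` entries is a quick way to spot repeated denied requests from an unexpected identity or IP address.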

C. AWS Config

AWS Config records configuration changes to the resources in your environment, and you can act on this data to optimize resource management in the cloud.

AWS Config tracks changes made to a resource and stores the information, including metadata, as a configuration item (CI). The recorded configuration items also serve as a resource inventory.

Through its integration with AWS CloudTrail, AWS Config can also show who made a change, when, and with which API call.

Note: AWS Config is Region-specific; you enable and configure it separately in each Region.
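To make the idea of tracking changes through configuration items concrete, here is a minimal sketch that compares the `configuration` sections of two CIs, for example two entries returned by the GetResourceConfigHistory API. It assumes both sections have already been decoded from JSON into dictionaries and only compares top-level keys; the function name is hypothetical.

```python
from typing import Any, Mapping


def diff_configuration(
    old: Mapping[str, Any], new: Mapping[str, Any]
) -> dict[str, tuple[Any, Any]]:
    """Hypothetical helper: {key: (old_value, new_value)} for top-level keys that differ.

    A key missing on one side is reported with None for that side.
    """
    changes = {}
    for key in sorted(set(old) | set(new)):
        before, after = old.get(key), new.get(key)
        if before != after:
            changes[key] = (before, after)
    return changes
```

Pairing such a diff with the CloudTrail events linked to each CI answers both "what changed" and "who changed it".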

D. Amazon CloudFront access logs

Enabling CloudFront access logs lets you record every user request made to your website through your CloudFront distribution. These logs contain details about each request, such as its date and time, the requester’s IP address, the URL path, and the response’s status code.

CloudFront delivers these logs to an Amazon S3 bucket, much like S3 server access logs, which provides durable and persistent storage. Enabling logging is free, but S3 charges you for the storage used.
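CloudFront standard log files start with `#Version` and `#Fields` header lines, followed by tab-separated records. The hypothetical parser below reads field names from the `#Fields:` line instead of hard-coding their order, so it keeps working if the field list differs; the sample field names in the usage note are only a subset of the real header.

```python
from typing import Iterable


def parse_cloudfront_log(lines: Iterable[str]) -> list[dict[str, str]]:
    """Hypothetical helper: parse a CloudFront standard log using its '#Fields:' header."""
    fields: list[str] = []
    rows = []
    for line in lines:
        line = line.rstrip("\n")
        if line.startswith("#Fields:"):
            # Header field names are space-separated.
            fields = line[len("#Fields:"):].split()
        elif line and not line.startswith("#") and fields:
            # Records are tab-separated, in header order.
            rows.append(dict(zip(fields, line.split("\t"))))
    return rows
```

Because the delivered files are gzip-compressed, you could feed the parser with `gzip.open(path, "rt")` and then, for example, count rows whose `sc-status` starts with `5` to spot origin errors.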

E. Amazon VPC Flow Logs

VPC Flow Logs capture information about the IP traffic going to and from the network interfaces of resources in your VPC. This data helps you resolve network communication incidents and monitor security by detecting traffic to prohibited destinations.
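As an illustration, the sketch below parses records in the default (version 2) flow log format, which has 14 space-separated fields, and picks out rejected traffic. It assumes the flow log uses the default format; custom formats would need a different field list. The function names are hypothetical.

```python
from typing import Iterable

# Field order of the default (version 2) flow log record format.
DEFAULT_FIELDS = (
    "version", "account-id", "interface-id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log-status",
)


def parse_flow_record(line: str) -> dict[str, str]:
    """Hypothetical helper: map one default-format record to its field names."""
    values = line.split()
    if len(values) != len(DEFAULT_FIELDS):
        raise ValueError(f"expected {len(DEFAULT_FIELDS)} fields, got {len(values)}")
    return dict(zip(DEFAULT_FIELDS, values))


def rejected(lines: Iterable[str]) -> list[dict[str, str]]:
    """Return only the records whose traffic was rejected."""
    return [r for r in map(parse_flow_record, lines) if r["action"] == "REJECT"]
```

Grouping the rejected records by `srcaddr` and `dstport` is a simple way to see who is probing which ports.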

Note: VPC Flow Logs can publish data to CloudWatch Logs, Amazon S3, or Amazon Data Firehose (formerly Kinesis Data Firehose).

Before creating VPC flow logs, you should be aware of some limitations that may affect how you implement or configure them:

- You cannot enable flow logs for a VPC that is peered with your VPC unless the peer VPC is in your account.

- You cannot modify a flow log's configuration after you create it. Instead, you need to delete it and create a new one.

To publish flow log data to a CloudWatch Logs log group, you need an IAM role with the appropriate permissions. You select this role when you create the flow log.
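A minimal sketch of that setup: an IAM trust policy that lets the VPC Flow Logs service assume the role, a permissions policy for writing to CloudWatch Logs, and the arguments for the EC2 CreateFlowLogs API (boto3 `create_flow_logs`). The VPC ID, log group name, and role ARN are placeholders, and in production you would scope `Resource` more narrowly than `"*"`.

```python
from typing import Any

# Trust policy: lets the VPC Flow Logs service assume the role.
TRUST_POLICY = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": {"Service": "vpc-flow-logs.amazonaws.com"},
        "Action": "sts:AssumeRole",
    }],
}

# Permissions the role needs to write flow log data to CloudWatch Logs.
PERMISSIONS_POLICY = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Action": [
            "logs:CreateLogGroup",
            "logs:CreateLogStream",
            "logs:PutLogEvents",
            "logs:DescribeLogGroups",
            "logs:DescribeLogStreams",
        ],
        "Resource": "*",  # narrow this to your log group in production
    }],
}


def build_flow_log_params(vpc_id: str, log_group: str, role_arn: str) -> dict[str, Any]:
    """Hypothetical helper: CreateFlowLogs arguments that publish to CloudWatch Logs."""
    return {
        "ResourceIds": [vpc_id],
        "ResourceType": "VPC",
        "TrafficType": "ALL",  # or "ACCEPT" / "REJECT"
        "LogDestinationType": "cloud-watch-logs",
        "LogGroupName": log_group,
        "DeliverLogsPermissionArn": role_arn,  # the IAM role described above
    }
```

Because a flow log cannot be modified after creation, keeping these parameters in code makes it easier to delete and recreate the flow log consistently when you need a change.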

Recommended practices for storing and analyzing logs in AWS:

By following these best practices, you can effectively store and analyze logs in AWS and improve the reliability and performance of your applications.

- Define a consistent logging format, such as JSON or the Apache Common Log Format.

- Use log rotation to prevent logs from taking up too much storage space. If your application runs on AWS Elastic Beanstalk, it can automate log rotation for you.

- Set up alerts that notify you of critical issues in your logs so you can resolve them quickly. Amazon CloudWatch alarms can alert you based on thresholds you define for standard or custom metrics.

- Use encryption to protect your log data in transit and at rest. For example, Amazon S3 server-side encryption protects log files stored in S3, and CloudWatch Logs can encrypt log groups with AWS KMS keys.

- Regularly review and analyze your logs to help identify potential issues. Services such as Amazon Athena, Amazon OpenSearch Service (the successor to Amazon Elasticsearch Service), and AWS Glue help you gain insights.

- Use a log aggregation service to centralize your logs. Amazon CloudWatch Logs can collect logs from your applications and many AWS services, and AWS CloudTrail centralizes records of API activity.

- Implement a data retention policy that specifies how long you need to keep your log data, to help avoid unnecessary storage costs and ensure compliance with regulatory requirements.
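As an example of the first practice, the sketch below uses Python's standard `logging` module to emit each record as a single-line JSON object with UTC timestamps, which log services such as CloudWatch Logs Insights can parse into fields. The field names (`timestamp`, `level`, `logger`, `message`) are an assumed convention, not a required schema.

```python
import json
import logging
import time


class JsonFormatter(logging.Formatter):
    """Format each log record as a single-line JSON object."""

    converter = time.gmtime  # render timestamps in UTC

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": self.formatTime(record, "%Y-%m-%dT%H:%M:%SZ"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry)


def get_json_logger(name: str) -> logging.Logger:
    """Return a logger that writes JSON lines to stderr (handler added only once)."""
    logger = logging.getLogger(name)
    if not logger.handlers:
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

Calling `get_json_logger("app").warning("disk at %d%%", 91)` writes one JSON line whose `message` is `disk at 91%`, so every entry shares the same structure regardless of where it was logged.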

Monitoring your logs lets you spot potential issues as soon as they occur. By combining this monitoring with thresholds and alerts, you can also receive automatic notifications of potential issues, threats, and incidents before they become production issues.

By logging what’s happening within your applications, network, and other cloud infrastructure, you can build a performance baseline and establish what’s routine and what isn’t.